Highlights

Top Insights

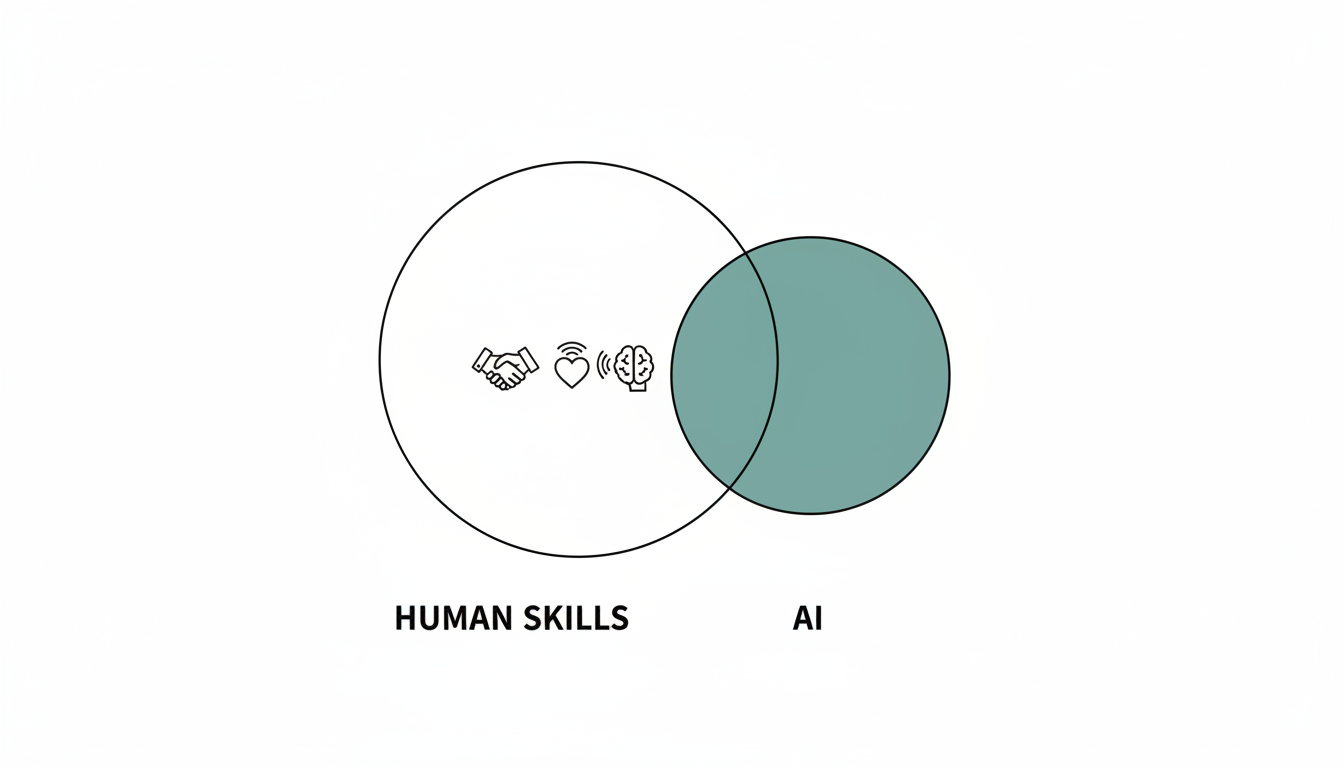

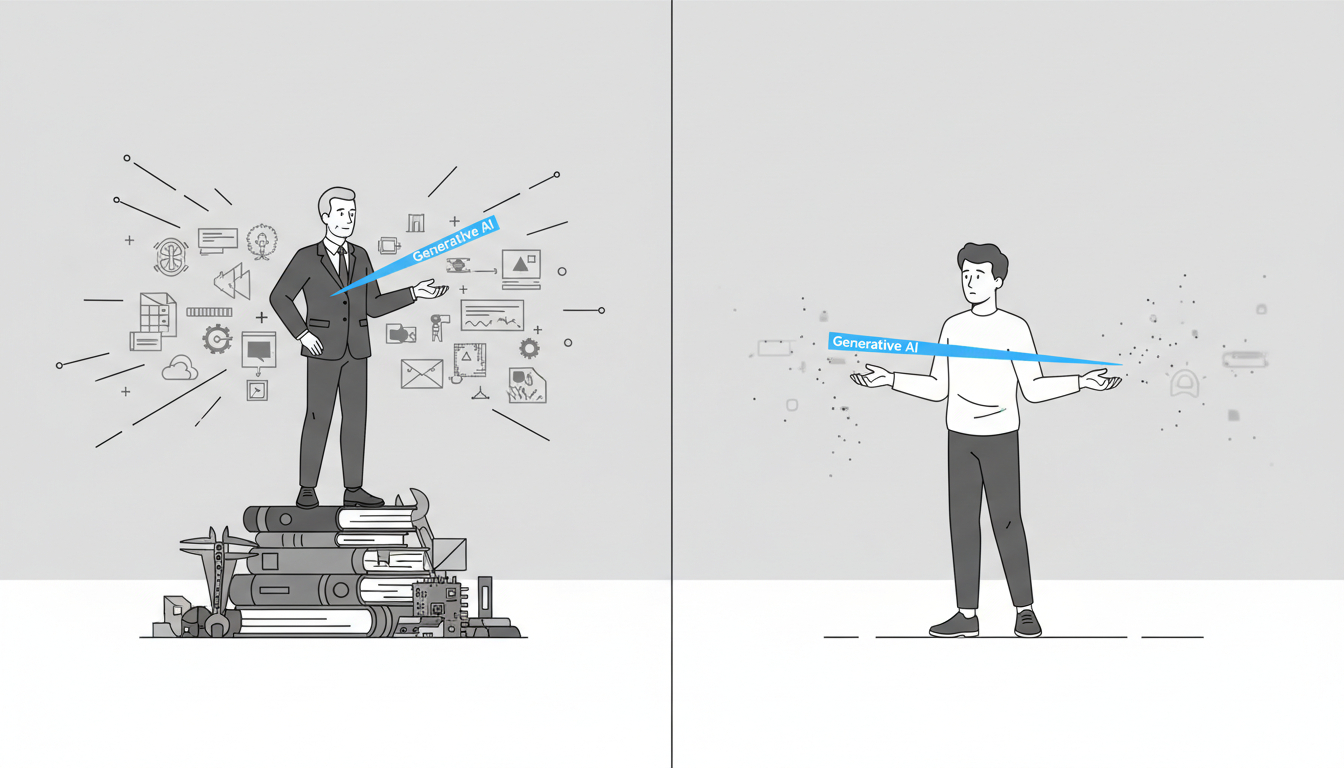

“57% of work hours are automatable” is not a job-loss forecast. The figure reflects technical potential; it does not mean that half of all jobs will vanish soon. Adoption takes decades, and the likely outcome is task-shifting inside jobs: roles shrink, expand, or morph rather than being eliminated en masse. The big disruption is job redesign, not a simple jobs-gone story.

Most skills survive because they’re used in both automatable and non-automatable work. You might expect AI to make a bunch of skills obsolete. Instead, most skills employers want today show up in work that is partly automatable and partly not. That overlap means the skills don’t disappear; their context changes. Example: writing/research won’t vanish, but people do less first-drafting and info-gathering and more question-framing, judgment, and interpretation.

Source: Agents, robots, and us: Skill partnerships in the age of AI (McKinsey)

Top News

1. Claude Opus 4.5 is Anthropic’s new flagship model, launching across apps/API.

2. Fara-7B is Microsoft’s open-weight 7B-parameter computer-use agent trained on large-scale synthetic web-interaction trajectories.

3. ChatGPT’s new “shopping research” feature conversationally gathers your needs, searches trustworthy web sources, and delivers a personalized, cited buyer’s guide.

4. Perplexity launched a free AI-powered shopping experience that uses conversational, personalized discovery and seamless PayPal checkout.

5. Alibaba has launched its Quark AI Glasses S1 and G1 in China, integrating Qwen for hands-free everyday AI features.

Additional Insights

1. What’s next for AlphaFold: A conversation with a Google DeepMind Nobel laureate (MIT Technology Review)

John Jumper recounts how AlphaFold 2 rapidly solved the long-standing protein-folding challenge by leveraging transformer networks, fast iteration, and rich evolutionary/structural data, then surprised even its creators by being widely and responsibly adopted across biology. In five years AlphaFold evolved through Multimer and AlphaFold 3 and generated predicted structures for roughly 200 million proteins, enabling both expected uses (everyday lab guidance, faster hypothesis narrowing) and “off-label” breakthroughs like accelerating synthetic protein design, and using AlphaFold as a search engine to uncover unknown binding partners. Scientists now treat it as a powerful but imperfect tool (especially weaker on multi-protein dynamics) while a new ecosystem of startups and labs builds more drug-focused models (e.g., predicting ligand binding and pushing accuracy below one angstrom). Jumper stresses that structure prediction is only one piece of drug discovery, but the field is trying to make it a bigger, more integrated part of workflows. Looking ahead, he sees the next frontier as combining AlphaFold’s deep, precise structural skill with the broad reasoning and literature-reading abilities of large language models, expecting LLMs to increasingly drive scientific discovery, even as he personally aims to pursue smaller, compounding ideas rather than chasing another Nobel-scale leap.

2. AI Agents Aren’t Ready for Consumer-Facing Work—But They Can Excel at Internal Processes (Harvard Business Review Digital Article)

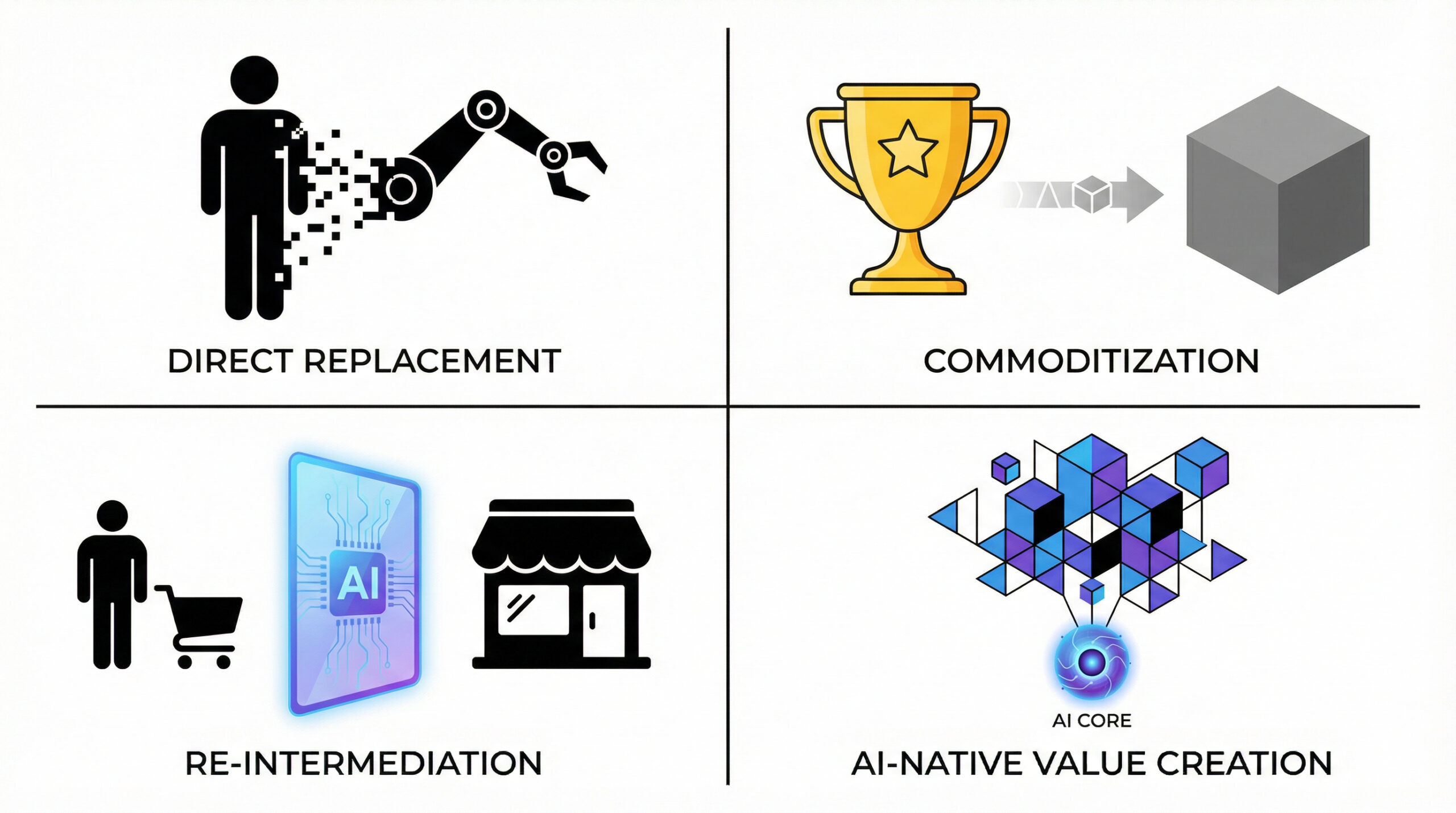

The article argues that while generative AI is overhyped and consumer use is still occasional rather than habitual, a real near-term breakthrough is coming from “agentic” multi-agent workflows inside companies, not from flashy customer-facing bots. The tech has evolved from brittle prompting to RAG to layered agent systems that split work into small tasks, validate outputs, and correct errors, making them reliable enough for structured, repetitive backend processes where humans can stay in the loop. Customer-facing uses remain a poor fit because real-world interactions are messy, unstructured, and intolerant of hallucinations, and consumers worry about privacy and security. A case with a European internet provider shows the payoff of starting internally: integrating many systems and giving technicians AI-generated briefs, recommendations, natural-language querying, and automated routine actions cut resolution time by 60% and saved over €1M annually. Key implementation lessons are that you should prototype fast before scaling, find root causes by working with frontline operators (not just managers), expect organizational friction to be harder than the tech, and build strong guardrails because models will hallucinate. Overall, “agentic transformation” resembles Lean: detailed, step-by-step process redesign that yields incremental gains at first, requires new internal roles (data/context/AI “black belts”), tight governance, and a focus on use cases with <1-year paybacks as tools evolve—setting up companies to continuously reinvent themselves over the coming decade.
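The "split work into small tasks, validate outputs, and correct errors" pattern can be illustrated with a minimal sketch. This is not the system described in the article: the function names, the retry limit, and the stub worker below are all hypothetical, and the worker stands in for whatever model or agent call a real deployment would use.

```python
from typing import Callable, List


def run_with_validation(
    task: str,
    worker: Callable[[str], str],
    validator: Callable[[str], bool],
    max_attempts: int = 3,
) -> str:
    """Run one small task, retrying until the validator accepts the output."""
    for _ in range(max_attempts):
        output = worker(task)
        if validator(output):
            return output
    # Escalate to a person instead of returning an unchecked answer.
    return f"NEEDS_HUMAN_REVIEW: {task}"


def run_workflow(
    tasks: List[str],
    worker: Callable[[str], str],
    validator: Callable[[str], bool],
) -> List[str]:
    """Process a job that has already been split into small tasks."""
    return [run_with_validation(t, worker, validator) for t in tasks]


def make_flaky_worker() -> Callable[[str], str]:
    """Hypothetical stand-in for an agent: the first attempt at each task fails."""
    calls = {}

    def worker(task: str) -> str:
        calls[task] = calls.get(task, 0) + 1
        if task == "impossible":
            return ""  # never produces a usable answer
        return "" if calls[task] == 1 else f"done: {task}"

    return worker


def non_empty(output: str) -> bool:
    """Toy validator: accept any output that is not blank."""
    return bool(output.strip())


results = run_workflow(
    ["check modem logs", "impossible"], make_flaky_worker(), non_empty
)
print(results)
```

In this toy run the first task succeeds on its second attempt, while the second task exhausts its retries and is flagged for a human, which mirrors the article's point that these workflows suit structured backend processes where people stay in the loop.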

3. As AI Changes Work, CEOs Must Change How Work Happens (BCG)

AI is rapidly rewriting the “DNA” of work, so CEOs can’t just layer tools onto old processes—they must redefine roles, performance, and workflows around human-AI collaboration. Most employees already use AI but feel undertrained and unclear about emerging AI agents, while firms face a growing shortage of digital talent, meaning readiness and reskilling are now strategic imperatives. Leaders should partner closely with CHROs, CIOs, and other execs to build an enterprise talent alliance, launch large-scale upskilling and internal talent-sharing programs, and redesign the talent pyramid so humans focus more on oversight, coaching, integration, and uniquely human skills like critical thinking and creativity. To win scarce AI talent, companies must offer meaningful, flexible work and clear learning paths, and CEOs should communicate transparently about why AI adoption matters to build trust and reduce fear.

Innovation Radar

1. AI Model Releases and Advancements

Claude Opus 4.5 is Anthropic’s new flagship model, launching across apps/API with cheaper pricing, top-tier coding/agent performance, improved everyday abilities, stronger safety, and platform/product upgrades like effort control and longer chats (Anthropic).

Tencent’s HunyuanOCR is a compact 1B-parameter, end-to-end vision-language OCR model that unifies spotting, parsing, extraction, VQA, and translation across 100+ languages while matching or beating larger VLMs on OCR benchmarks (MarkTechPost).

FLUX.2 is Black Forest Labs’ next-gen visual intelligence model family that delivers highly realistic, prompt-faithful, multi-reference image generation and 4MP editing via both open-weight checkpoints and production APIs (BFL).

DeepSeek has open-sourced DeepSeek-Math-V2, an IMO gold-level math model that trains “self-verifiable” reasoning by combining proof generation with rigorous automated verification and honest self-checking (36Kr).

Fara-7B is Microsoft’s open-weight 7B-parameter computer-use agent trained on large-scale synthetic web-interaction trajectories to act directly from screenshots (without accessibility trees), achieving state-of-the-art small-model performance on real web tasks while emphasizing on-device efficiency, user-controlled safety, and sandboxed experimentation (Microsoft).

2. AI Tools and Features

Gemini is rolling out to Android Auto, upgrading voice control into a more natural, conversational assistant that helps with navigation, messaging, productivity, music, and live chat while you drive (Google).

Google’s new U.S. “Let Google call” feature lets you search “near me,” answer a few questions, and have Google call local stores then send you a summary by text or email (Google).

Exa 2.1 launches with 10× more compute to greatly boost the quality and speed of Exa Fast/Auto/Deep search APIs and its MCP deep-search tool, making Fast sub-500ms and Deep best-in-market for agentic search (Exa).