Highlights

Top Insights

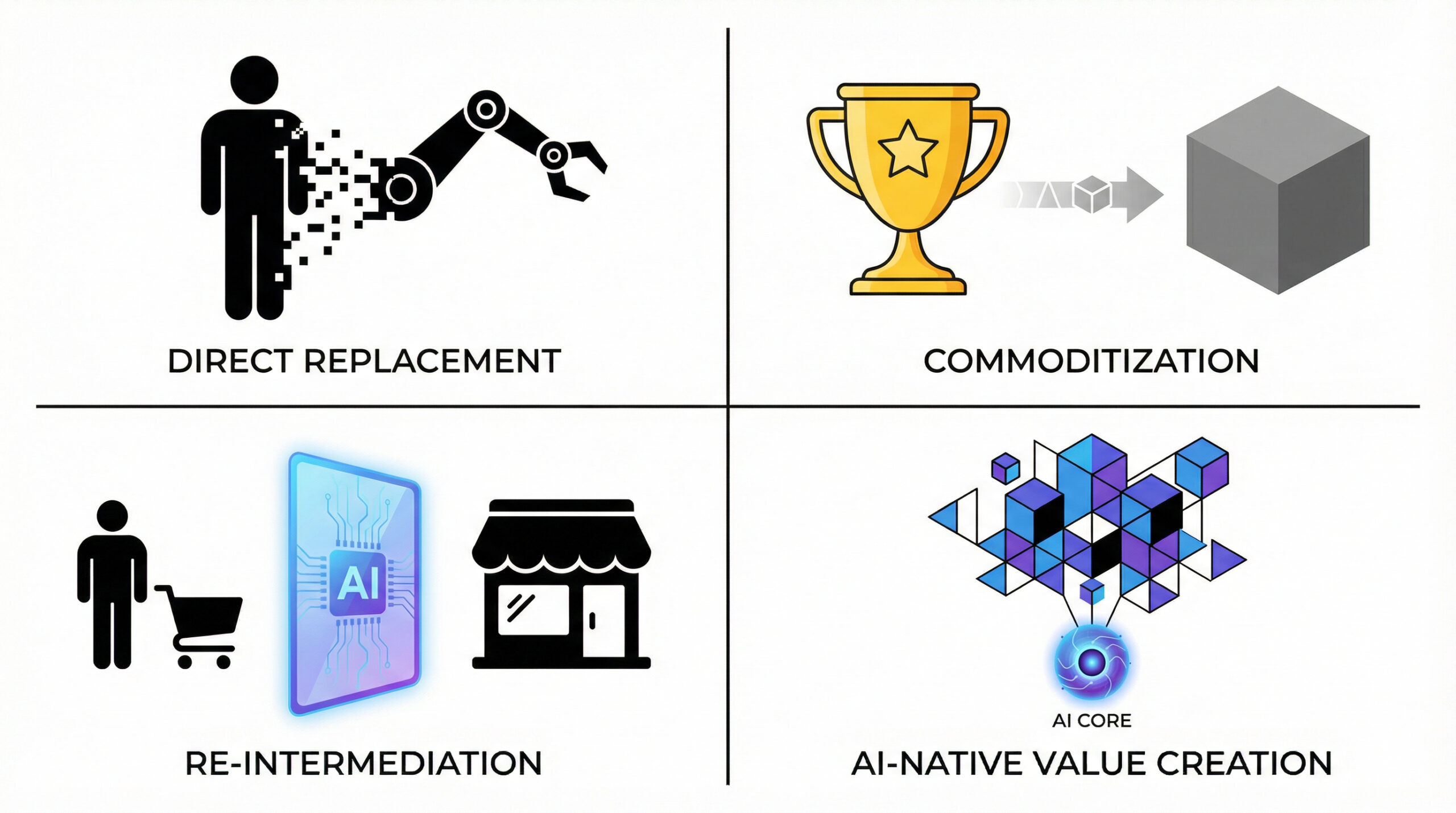

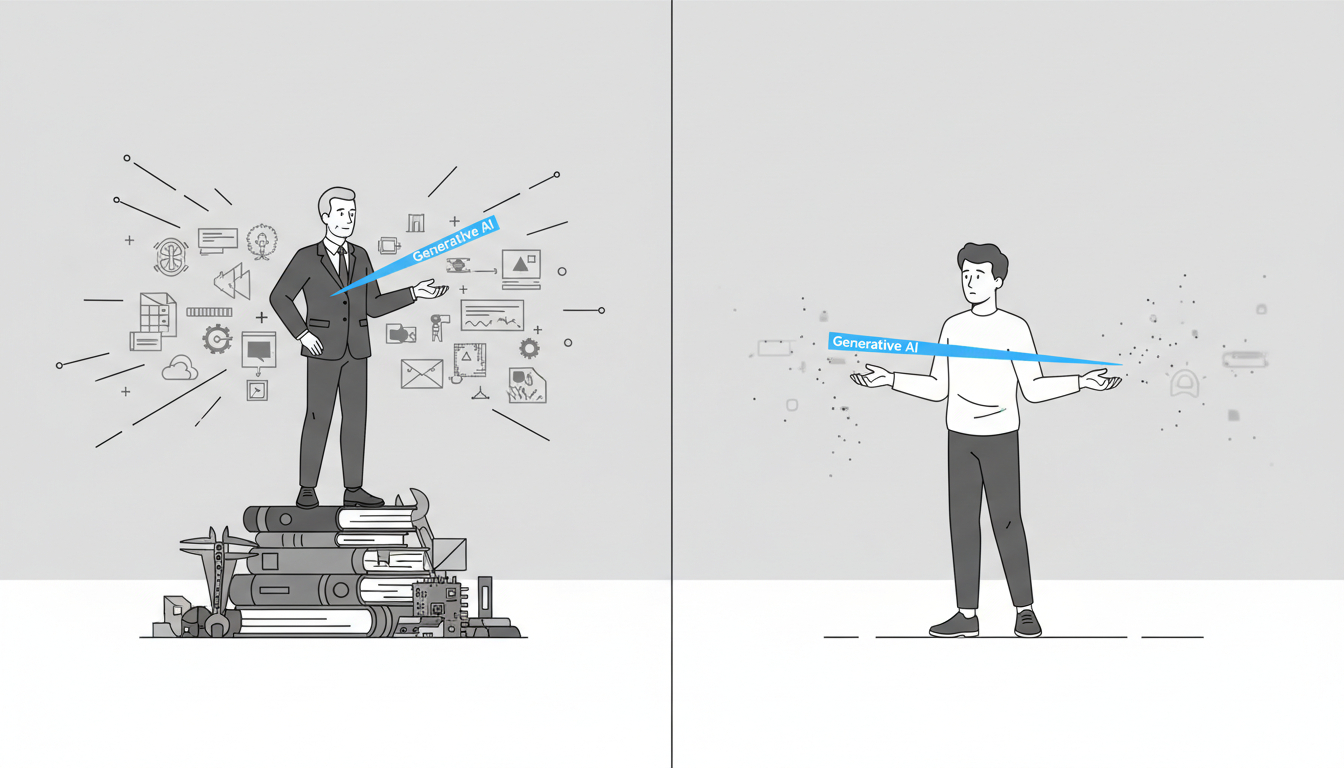

1. Two companies look similar. One treats technology as a support tool. The other treats it as an organizational role, almost like a digital manager. The second approach produces faster decisions, flatter structures, and greater scalability.

2. Tool mindset: Technology digitizes existing workflows (e.g., moving approvals online) but leaves decision rights, hierarchy, and processes largely unchanged.

Role mindset: Technology actively does managerial work: collecting data, analyzing trade-offs, issuing tasks, and coordinating across teams.

3. Role mindset: Employees collaborate through technology, which dynamically connects the right people, data, and tasks. For instance, instead of routing proposals through departments for approval, the second company forms cross-functional task forces from the start, with the system coordinating feasibility checks and workflow design in parallel.

4. Tool mindset: Managers direct work, approve decisions, and resolve exceptions.

Role mindset: Technology handles coordination and analysis; managers focus on strategy, resource allocation, and algorithm and system governance.

Source: One Company Used Tech as a Tool. Another Gave It a Role. Which Did Better? (HBR Digital Article)

Top News

1. Microsoft announced Copilot Checkout and Brand Agents.

2. Amazon has launched Alexa.com.

3. Google unveiled Gemini Enterprise for Customer Experience, introducing AI shopping and ordering agents for retailers. Google also announced the Universal Commerce Protocol, an open standard to enable AI agents to handle end-to-end shopping and checkout.

4. Google has launched a beta of Gemini Personal Intelligence, allowing users to securely connect Gmail and Google Photos.

5. Anthropic announced Cowork, a feature that lets users give Claude controlled access to folders and tools.

6. Anthropic announced HIPAA-ready Claude for Healthcare.

Additional Insights

1. Climbing Bots, Smart Bricks, Super-Bright TVs: The Best Stuff From Tech’s Biggest Gadget Show (WSJ)

CES 2026 showcased a near-future tech landscape dominated by tiny yet powerful accessories, ultrabright next-gen displays, pervasive AI, and an explosion of increasingly mobile robots. Standout themes included miniature phone gear packing serious storage and power, Lego’s sensor-rich smart bricks blending physical play with digital interaction, and micro RGB TVs promising unprecedented brightness and color at high prices. AI is spreading from TVs to desks and wearables to simplify settings, translate speech, and capture thoughts. Home robots are evolving fastest in practical niches like vacuums and novelty companions, while humanoid helpers remain more concept than reality.

2. The physical AI deployment gap (a16z)

The essay argues that despite rapid advances in robotics research—such as vision-language-action models, sim-to-real transfer, and generalist capabilities—there is a widening gap between impressive lab demos and real-world deployment, driven by challenges like distribution shift, stringent reliability requirements, latency constraints, integration with enterprise systems, safety certification, and maintenance. It emphasizes that production environments demand worst-case reliability, low-latency edge models, and seamless operational integration, which current research systems rarely meet. The gap is compounded because failures increase costs, limit scale, and reduce data for improvement. Closing it will require not just better models, but new infrastructure for deployment-time data collection, reliability engineering for learned systems, efficient edge-deployable architectures, robust integration tooling, and updated safety frameworks. Progress is likely to be incremental and ecosystem-driven rather than a single breakthrough, with strategic implications for global competitiveness in robotics.

3. 2026: This is AGI (Sequoia)

The authors argue that AGI has effectively arrived in functional terms, defined not by a strict technical benchmark but by the practical ability of AI systems to autonomously “figure things out” over long time horizons, citing emerging long-horizon agents—especially coding and task-oriented agents—as evidence. They contend that recent advances in reasoning models and agent scaffolding now allow AI to iterate, make decisions, recover from errors, and achieve real-world outcomes in ways previously limited to humans, with progress following an exponential curve. This shift transforms AI applications from conversational “talkers” into autonomous “doers” that can be hired like colleagues across domains such as software, law, medicine, and recruiting. For founders, the implication is a fundamental change in how work is productized, managed, and sold, moving from tools that save time to agents that perform sustained work, making previously ambitious, long-term visions suddenly achievable in the near term.

4. Building leaders in the age of AI (McKinsey)

The article argues that while AI can dramatically accelerate tasks like writing, analysis, and coordination, it cannot replace the fundamentally human work of leadership. It emphasizes that effective leaders in the AI era must shift from command-and-control to creating context, using AI as a thinking partner rather than a substitute for judgment. The authors highlight three uniquely human leadership responsibilities: setting aspirational goals that mobilize people, exercising accountable judgment aligned with values, and fostering nonlinear creativity that enables breakthrough outcomes. They also stress the importance of identifying and developing high-potential leaders based on intrinsic qualities such as resilience, learning agility, empathy, and collaboration, rather than relying solely on credentials. To build the next generation of leaders, organizations should clarify desired leadership attributes, embed continuous learning cultures, invest in trust and servant leadership, and help leaders protect their time and energy for critical moments.

5. Meet the new biologists treating LLMs like aliens (MIT Technology Review)

The article describes how researchers are increasingly treating large language models as complex, evolving organisms rather than deterministic software, using tools inspired by biology and neuroscience to understand their behavior. It argues that because LLMs are grown through training rather than explicitly designed, their internal mechanisms are opaque even to their creators, creating risks around trust, reliability, and alignment. Through examples from Anthropic, OpenAI, and Google DeepMind, it highlights discoveries showing that models can contain loosely connected “personas,” process true and false statements through different internal pathways, and exhibit broad misbehavior when trained on narrow undesirable tasks. The article contrasts fine-grained mechanistic interpretability with chain-of-thought monitoring, showing how each offers partial but valuable insight into what models are doing and why they sometimes behave unexpectedly. It concludes that while full understanding may be out of reach, and any understanding fleeting as models rapidly evolve, even limited interpretability can replace misleading folk theories with clearer, more grounded ways of thinking about what these systems are and how humans should coexist with them.

6. 8 takeaways from CES ’26 (IDEO)

The piece reflects on CES through a human-centered design lens, arguing that designing for real human needs and outcomes matters more than technological novelty. It questions whether AI-driven products, such as humanoid robots, truly solve meaningful problems or simply showcase technical ambition, and suggests rethinking systems and environments rather than mimicking human behavior. The article highlights a growing desire for simpler, more tangible technologies that restore user agency, alongside rising concerns about consent, data ownership, and trust as bodies and homes become platforms for sensing. It notes encouraging progress in accessibility and health tech, while emphasizing that empowerment depends on design choices around control and dignity. Joy, play, and delight are presented as critical to adoption and trust, often serving as gateways for new technologies to enter everyday life. The authors also stress the importance of infrastructure, ecosystems, and compatibility with existing behaviors for long-term success, while expressing disappointment at reduced emphasis on sustainability and the broader systems of brand and trust that support hardware innovation.

7. Scaling long-running autonomous coding (Cursor)

The piece shares insights from experiments running large numbers of autonomous coding agents over long periods and reflects on what worked and failed. It argues that single agents are insufficient for complex projects and that naïve parallelization with flat coordination leads to bottlenecks, risk aversion, and stalled progress. A hierarchical structure separating planners and workers proved far more effective, enabling hundreds of agents to collaborate on massive codebases for weeks with minimal conflict. The authors highlight that simplicity, appropriate structure, and especially careful prompting matter more than elaborate coordination mechanisms. They also note that different models excel at different roles and that long-running autonomous work benefits from models better at maintaining focus. Overall, the post suggests that scaling autonomous coding with many agents is feasible and promising, though coordination and efficiency remain open challenges.
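
The planner/worker split described above can be sketched in a few lines. This is only an illustration under stated assumptions, not Cursor's implementation: the names `Task`, `plan`, `work`, and `run` are hypothetical, the planner is a plain function rather than a model, and each worker is a stub standing in for a coding agent.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    """A unit of work small enough for one worker to finish alone."""
    task_id: int
    description: str


def plan(goal: str, parts: list[str]) -> list[Task]:
    """Planner role (hypothetical): split a goal into independent tasks."""
    return [Task(i, f"{goal}: {part}") for i, part in enumerate(parts)]


def work(task: Task) -> str:
    """Worker role (hypothetical): finish one task without talking to peers."""
    # A real worker would call a coding model here; this stub only reports.
    return f"done [{task.task_id}] {task.description}"


def run(goal: str, parts: list[str], max_workers: int = 4) -> list[str]:
    """Fan the planner's tasks out to workers and collect results in task order."""
    tasks = plan(goal, parts)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Executor.map returns results in the order of the input tasks.
        return list(pool.map(work, tasks))


if __name__ == "__main__":
    for line in run("migrate build system", ["audit targets", "port rules", "fix tests"]):
        print(line)
```

In this sketch workers never coordinate with one another; all structure lives in the planner, which loosely mirrors the post's point that a simple hierarchy can matter more than elaborate coordination mechanisms.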